Apache Beam - Create Data Processing Pipelines

One major data engineering problem is to find the right tools and programming models to build both robust data processing pipelines and efficient ETL processes for data transformation and integration.

Beam (incubating) attempts to solve this problem by providing a unified programming model to create data processing pipelines. The Apache Beam open source project is currently in incubation mode and we invite you to join the community and pitch in to help build.

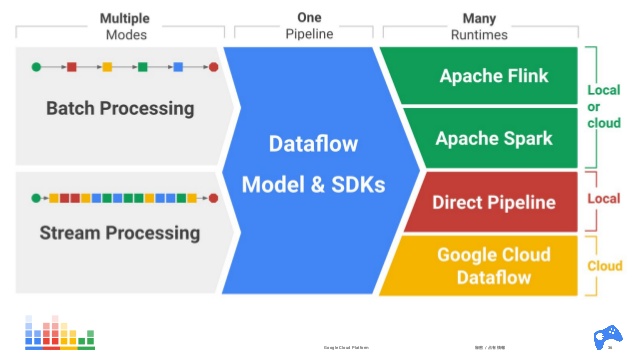

You start by building a program that defines the pipeline using one of the open source Beam SDKs. The pipeline is then executed by one of Beam’s supported distributed processing back-ends, which include Apache Flink, Apache Spark, and Google Cloud Dataflow.

Beam is particularly useful for Embarrassingly Parallel data processing tasks, in which the problem can be decomposed into many smaller bundles of data that can be processed independently and in parallel. You can also use Beam for Extract, Transform, and Load (ETL) tasks and pure data integration. These tasks are useful for moving data between different storage media and data sources, transforming data into a more desirable format, or loading data onto a new system.

Apache Beam SDKs

The Beam SDKs provide a unified programming model that can represent and transform data sets of any size, whether the input is a finite data set from a batch data source, or an infinite data set from a streaming data source. The Beam SDKs use the same classes to represent both bounded and unbounded data, and the same transforms to operate on that data. You use the Beam SDK of your choice to build a program that defines your data processing pipeline.

See: http://beam.incubator.apache.org/